We've all been there.

That panic when a Google algorithm update rolls out, and it isn't in your favour. Or maybe it's when a pandemic hits and nobody wants to travel, causing demand to plummet?

It's 2020. Who knows.

In these situations, one of the best things you can do is first take a deep breath.

And then, second, start diving into the data to find where the drop has occurred and then begin creating a plan to fix it.

Read on for an actionable guide on analysing and fixing a drop in organic traffic.

First, figure out the cause.

Your first step is diagnosing what caused the drop in organic traffic.

The usual culprits tend to be:

- You've messed up something technical on the site

- You haven't provided enough value for users, and Google's released an algorithm update to replace you with sites that have

- Demand has dropped for your product/service

- People have stopped searching for your brand as much

- Your tracking is broken

We need to start by ruling out some potential causes.

Why is this the first step?

Individually ruling out possible causes is essential as it prevents false positives.

On more than one occasion, a site I've been working on has had its tracking break, or a technical issue show up, at the same time as an algorithm update.

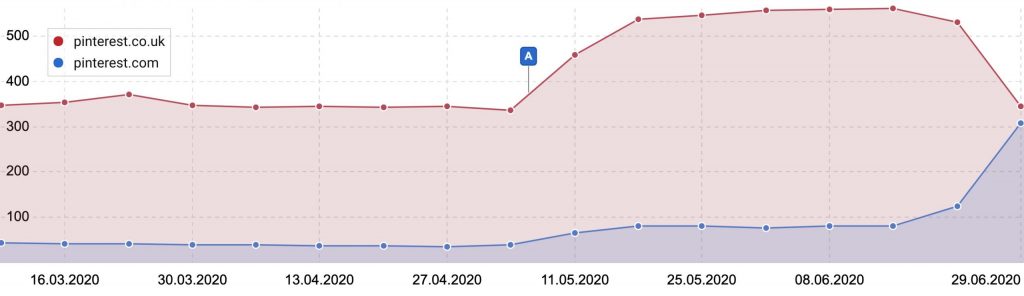

Even with my last algorithm update analysis, I found Pinterest had dropped significantly.

Only to later find all that traffic was going to the .com site, potentially highlighting an issue outside of the update.

Below are some steps I'd recommend running through to figure out what is going on.

Check your tracking works.

This is the first thing you need to rule out.

If you're a Google Analytics pro, you can just visit a few URLs on your site, and check the Google Analytics or Google Tag Manager code is in the HTML.

Here is an example of what you should see in the source for GTM (dataLayer code is optional).

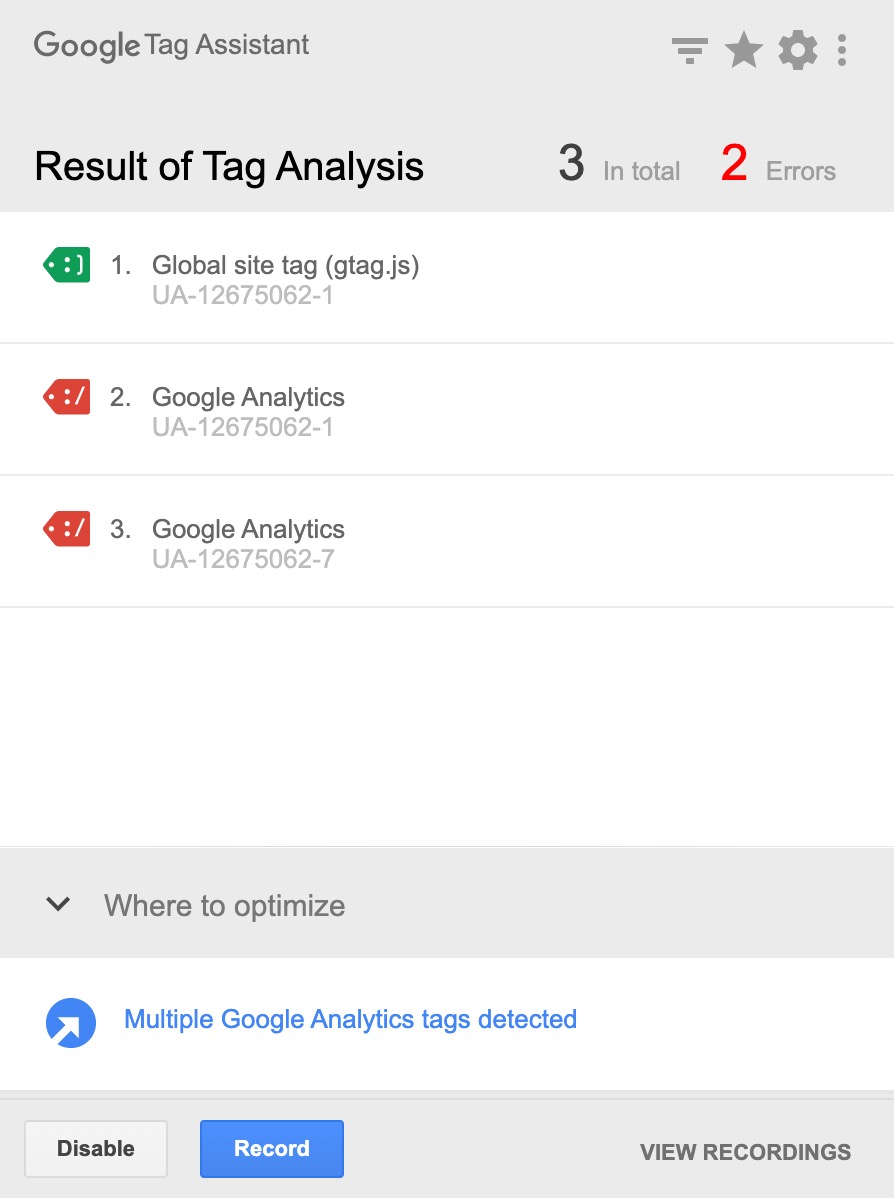
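If you'd rather not eyeball the source of each page, the same check can be roughly scripted. Below is a minimal sketch that counts common Google tag patterns in a page's HTML. The pattern list is my own assumption and is not exhaustive, the `find_tracking_snippets` name is hypothetical, and the sample HTML is a trimmed-down stand-in for a real GTM snippet with a made-up container ID.

```python
import re

# Assumed patterns for common Google tag snippets; extend for your own setup.
PATTERNS = {
    "gtm_loader": re.compile(r"googletagmanager\.com/gtm\.js"),
    "gtm_container_id": re.compile(r"\bGTM-[A-Z0-9]+\b"),
    "gtag_loader": re.compile(r"googletagmanager\.com/gtag/js\?id="),
    "analytics_js": re.compile(r"google-analytics\.com/analytics\.js"),
}


def find_tracking_snippets(html: str) -> dict:
    """Count how many times each known tracking pattern appears in the HTML."""
    return {name: len(pattern.findall(html)) for name, pattern in PATTERNS.items()}


# Trimmed, illustrative GTM snippet with a made-up container ID.
sample_html = """
<head>
<script>(function(w,d,s,l,i){w[l]=w[l]||[];
j.src='https://www.googletagmanager.com/gtm.js?id='+i+dl;
})(window,document,'script','dataLayer','GTM-ABC123');</script>
</head>
"""

counts = find_tracking_snippets(sample_html)
print(counts)
```

A count of zero across the board on a key template suggests the tag is missing. A count above one for the same loader is worth a closer look, as it can mean the tag is installed twice.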

Another method is by using the tag assistant extension for Chrome.

Once installed, enable it, refresh your page and see the results.

You should see one pageview request going to your Google Analytics property. If you see two, pageviews are being double-counted, which often shows up as an unusually low bounce rate, and you need to fix your tracking.

No tracking issues?

Let's move on.

Check it's not a technical SEO issue.

Next, let's check nothing has broken on the site.

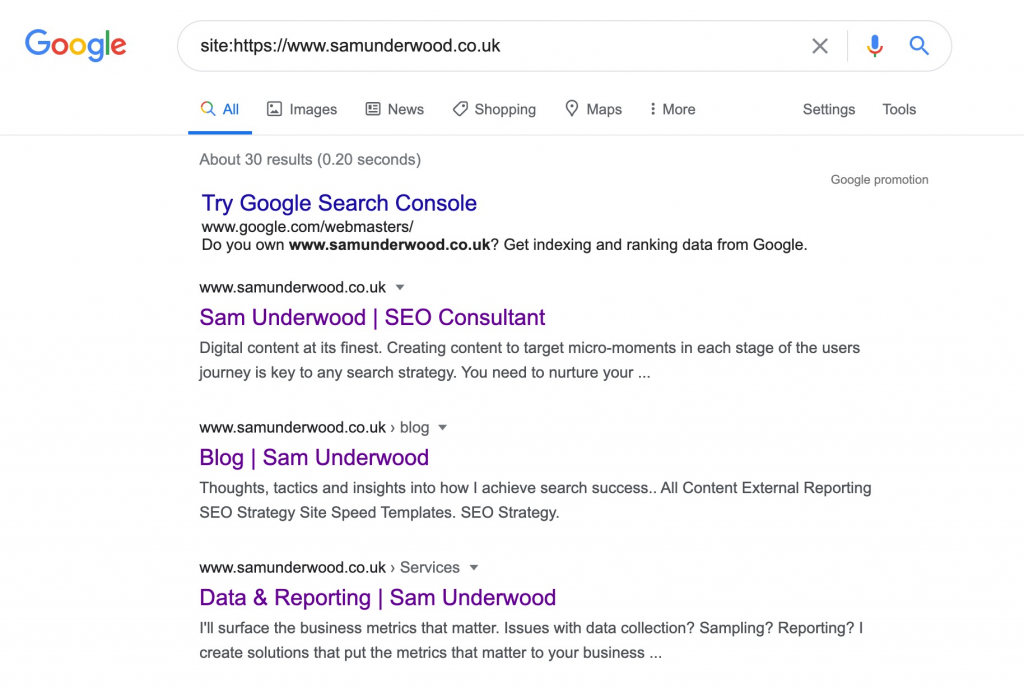

A quick first check is performing a site:domain.com search.

If crucial pages are missing from the result, or there is messaging about URLs being blocked from crawling, there may be a technical issue. This will likely be because of:

- A noindex tag in the <head> of the page or in HTTP headers

- Robots.txt rules not allowing crawling

Check whether a noindex is preventing indexing.

To quickly check for a noindex, I'd recommend installing a Chrome extension such as SEO peek.

Once installed, you can see HTTP headers as well as any tags that may be causing the issue.

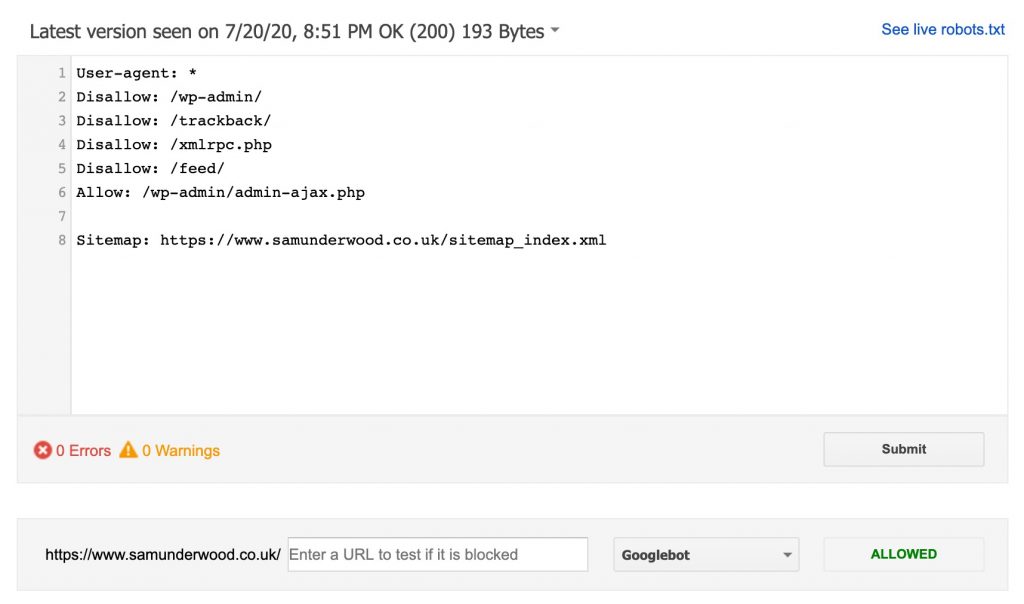
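If you need to check more than a handful of pages, the same noindex check can be sketched in code. This is a minimal example using only Python's standard library. It assumes you've already fetched the page HTML and response headers yourself, and the `has_noindex` helper is a hypothetical name of mine, not part of any tool mentioned here.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.append(attr.get("content", "").lower())


def has_noindex(html: str, headers: dict) -> bool:
    """Return True if a noindex is set in a robots meta tag or the X-Robots-Tag header."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for key, value in headers.items():
        if key.lower() == "x-robots-tag":
            header_value = value.lower()
    in_meta = any("noindex" in directive for directive in parser.directives)
    return in_meta or "noindex" in header_value


print(has_noindex('<head><meta name="robots" content="noindex, follow"></head>', {}))
print(has_noindex("<head><title>Sheds</title></head>", {"X-Robots-Tag": "noindex"}))
print(has_noindex('<head><meta name="robots" content="index, follow"></head>', {}))
```

The first two calls report a noindex (one from the meta tag, one from the header), while the third does not.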

Check whether the robots.txt is preventing crawling.

If you want to check for a robots.txt issue, I'd recommend using the Google Search Console (GSC) robots.txt testing tool and entering a few different URLs to see what it says.

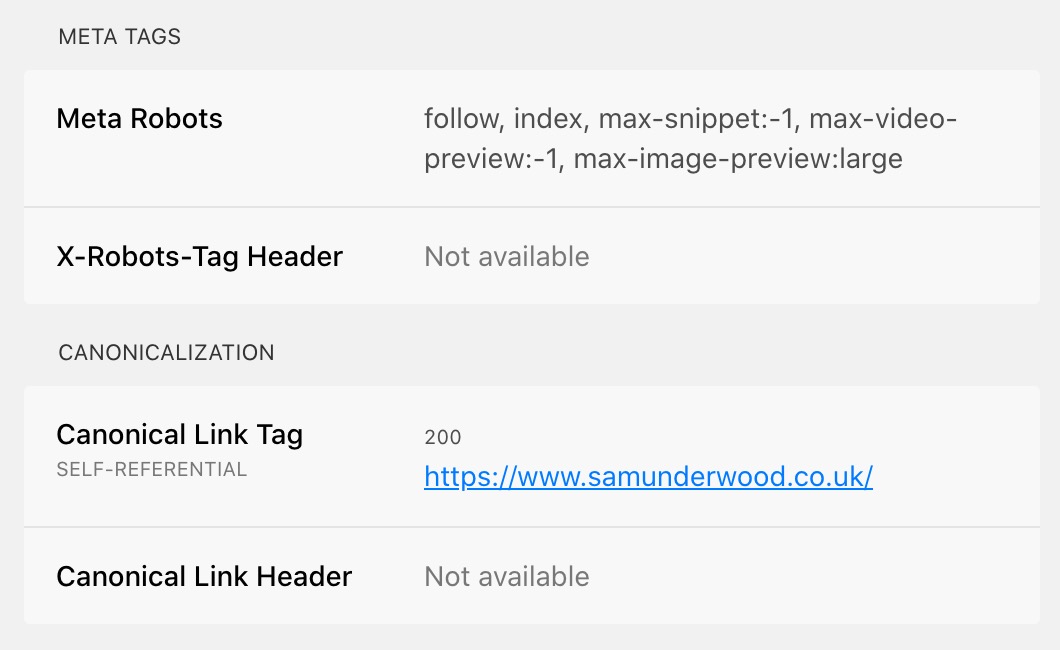
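For a rough offline version of the same test, Python's standard library includes a robots.txt parser. The sketch below uses a made-up robots.txt and example.com URLs purely for illustration. One caveat: Python's parser applies rules in file order, which can differ from how Google resolves conflicting Allow/Disallow rules, so treat the GSC tool as the source of truth.

```python
from urllib.robotparser import RobotFileParser

# Illustrative robots.txt content; swap in your own file's contents.
robots_txt = """
User-agent: *
Disallow: /checkout/
Disallow: /search
"""


def blocked_urls(robots_txt: str, urls: list, user_agent: str = "Googlebot") -> list:
    """Return the URLs the given user agent is not allowed to crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


urls_to_test = [
    "https://example.com/c/sheds",
    "https://example.com/checkout/basket",
    "https://example.com/search?q=sheds",
]

blocked = blocked_urls(robots_txt, urls_to_test)
print(blocked)
```

If a URL you expect to rank shows up in the blocked list, that's a strong hint the robots.txt is part of the problem.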

Check for issues with canonicals.

One common technical SEO issue is with canonical tags not correctly referencing the right page.

If you find it is an issue with canonicals, audit them URL by URL and see if you can spot a pattern.

Sometimes it can be something simple like they're referencing pages incorrectly or they include parameters when they shouldn't.
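To make the URL-by-URL audit less painful, here's a minimal sketch that flags the simple cases mentioned above. It assumes you already have the HTML for each page, and the `audit_canonical` helper and its issue labels are hypothetical names of mine. A flag here means "worth a manual look", not necessarily "broken".

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


class CanonicalParser(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.canonicals.append(attr.get("href") or "")


def audit_canonical(page_url: str, html: str) -> list:
    """Return a list of potential canonical issues for one page."""
    parser = CanonicalParser()
    parser.feed(html)
    issues = []
    if not parser.canonicals:
        issues.append("missing canonical")
    elif len(parser.canonicals) > 1:
        issues.append("multiple canonicals")
    # Compare against the page URL without its query string or fragment.
    page_clean = urlsplit(page_url)._replace(query="", fragment="").geturl()
    for href in parser.canonicals:
        if urlsplit(href).query:
            issues.append("canonical includes parameters")
        if href.rstrip("/") != page_clean.rstrip("/"):
            issues.append("canonical differs from the page URL")
    return issues


page = "https://example.com/c/sheds"
print(audit_canonical(page, '<link rel="canonical" href="https://example.com/c/sheds">'))
print(audit_canonical(page, '<link rel="canonical" href="https://example.com/c/sheds?page=2">'))
```

Run it across a crawl export and group the results by issue, and any pattern (for example, every category page including a parameter) tends to jump out quickly.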

Other potential technical issues.

Ultimately, the list of potential tech issues could go on. You could be having problems with:

- International targeting

- Redirect issues from a migration

- Lack of parity between your mobile and desktop site

- Internal linking changes reducing equity to essential pages

- 404s on pages with considerable link equity

- And more

To entirely rule out technical, you'll need to do an audit. If you're skilled in SEO, consider following a technical SEO audit template.

Check for technical issues site-wide.

If you want to check for these issues site-wide, I'd recommend using tools such as Sitebulb or Screaming Frog.

If you want to be alerted next time a technical issue arises, take a look at ContentKing.

Check for algorithm updates.

After I've ruled out both tracking and potential tech issues, I'll usually investigate whether there has been an unannounced algorithm update.

If you aren't on Twitter, my usual first place to check is Search Engine Roundtable for any news.

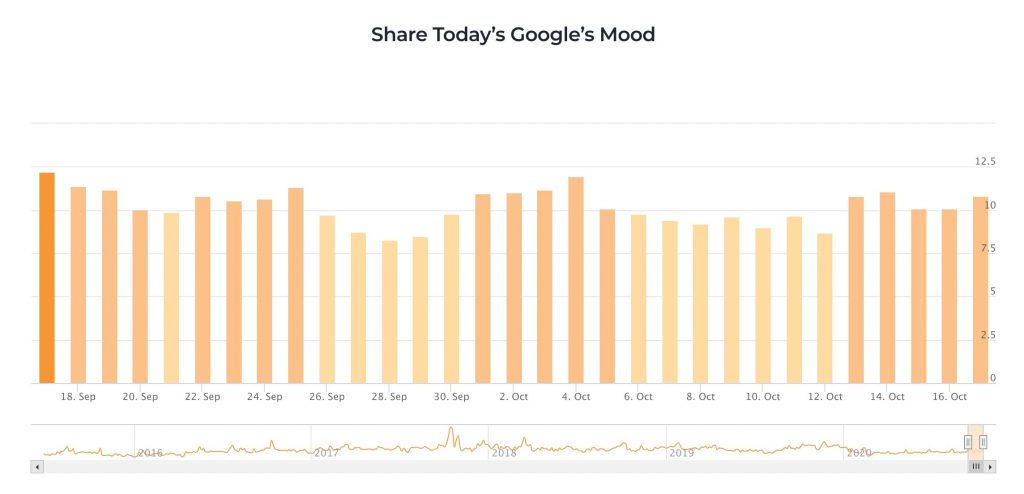

If it's early days, you can also use a variety of tools that monitor search results to measure how volatile they are.

Here are some of the top ones:

- SEMrush Sensor

- RankRanger Risk Index

- CognitiveSEO

- Accuranker Grump

- Advanced Web Ranking

- SERPmetrics Flux

- MozCast

If it is an unannounced algorithm update, jump ahead to the next step, analysing the drop.

There is also the potential here that Google has rolled out a pre-announced algorithm update, and you've missed it. Recent example updates include:

Double-check the Google Webmasters blog to see if there has been an announcement you've missed.

If there has been a pre-announced update you've missed, look into following the guidelines on the webmaster blog to update your site.

Check it isn't demand related.

If we're still coming up empty, it's worth also clarifying the drop isn't caused by a decline in demand for your service.

There are two ways I tend to do this.

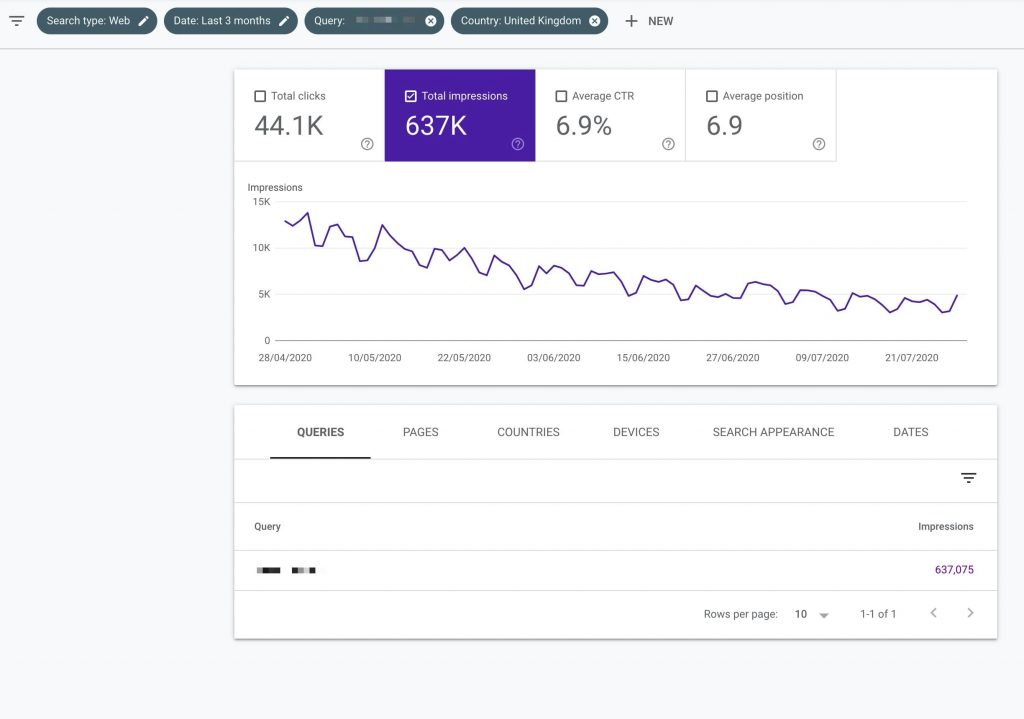

My favourite way to see whether search demand has changed is the impression data you get through GSC.

Impression data shows how many times you've been seen in search results, making it a great way to measure the number of searches for a query.

What I usually do here is pick out a few head terms to see if impressions have changed significantly over time.

In this example, demand has been declining for months, so this would explain a drop in search traffic.

I'd also recommend doing some year over year comparisons within GSC to see if the demand change is seasonal.
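The year over year comparison boils down to a percentage change per head term. Here's a small worked sketch. The terms and impression counts are made up for illustration, and `percent_change` is just a helper name I've picked.

```python
from typing import Optional


def percent_change(previous: float, current: float) -> Optional[float]:
    """Percentage change from previous to current, or None if there is no baseline."""
    if previous == 0:
        return None
    return (current - previous) / previous * 100


# Hypothetical impressions for the same month: (last year, this year).
impressions = {
    "sheds": (12000, 9000),
    "garden sheds": (4000, 4200),
}

changes = {
    term: round(percent_change(last_year, this_year), 1)
    for term, (last_year, this_year) in impressions.items()
}
print(changes)
```

If most head terms show a similar decline year over year, demand is the likely culprit. If only your clicks have fallen while impressions hold steady, look elsewhere.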

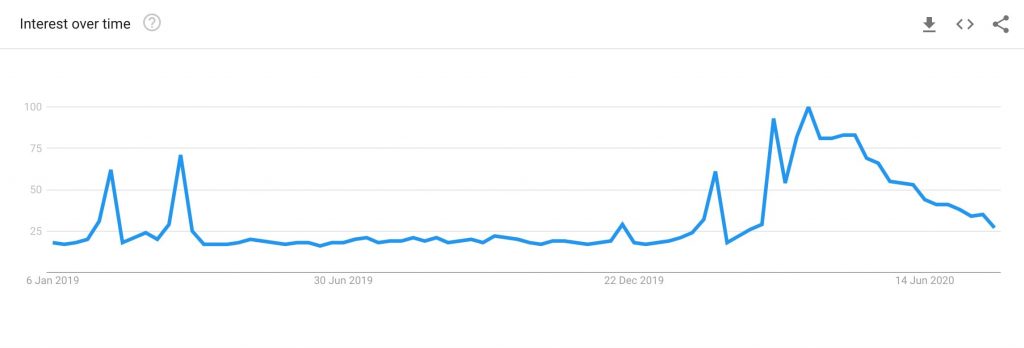

If you aren't consistently on page one, making impression data inaccurate, the next best place to look is Google Trends.

Simply enter a head term and see how demand in your market is changing.

Check whether you've lost links.

There is the potential that you've lost essential backlinks which is hurting your organic performance.

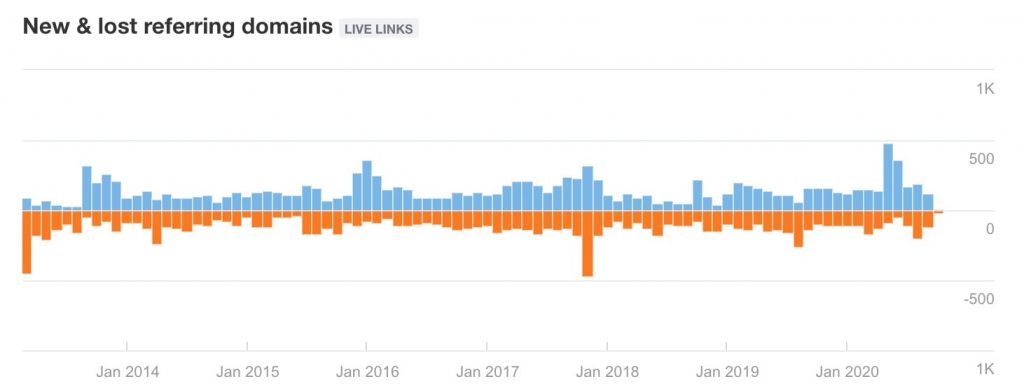

To check whether that is the case, use a tool such as Ahrefs Site Explorer and check the below chart on the overview dashboard.

If it looks like you've had a spike in lost links, investigate further in the lost referring domains report.

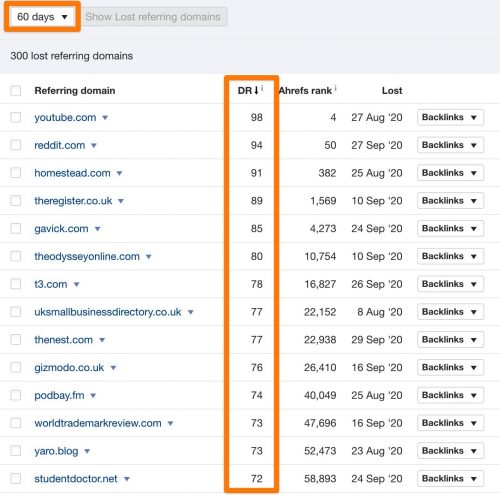

Once in the report, select a long timespan and check for domains you've lost links from that have a high Domain Rating.

Use the Backlinks dropdown to find which page you lost the link from. If the link was relevant and of high-value, consider reaching out to the site owner to try to reclaim the backlink.

And if it's none of the above?

If you've gone through the above checks and come up empty, this points towards a broader SEO issue where your current strategy just isn't working, causing competitors to overtake you.

In this scenario, I recommend going to the next section and analysing the impact, then creating an action plan.

Analysing the impact

In some scenarios, further analysis is needed outside of just spotting the issue.

If the cause of the drop is technical or tracking related, the solution is as simple as fixing the issue.

If it's demand related, there isn't much you can do with your SEO to quickly counteract that. You need to focus more on marketing tactics you can use to generate demand.

However, if you've seen a gradual decline or you've been impacted by an algorithm update, you need to understand where the drop has occurred before you create an action plan.

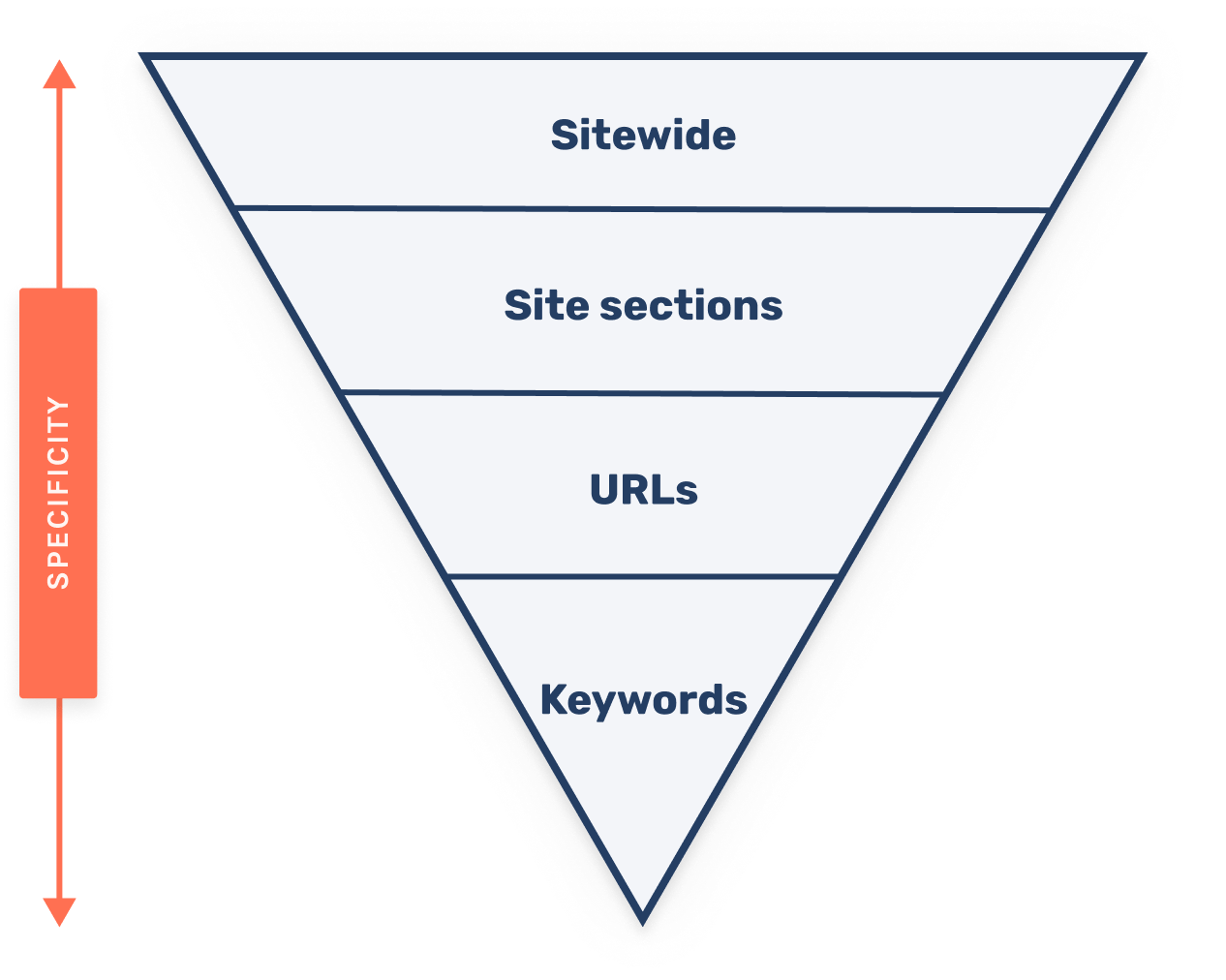

Analysing the drop by specificity.

I work through this at four different levels of specificity.

- Sitewide: Obvious one, the overall impact to organic traffic

- Site sections: Which areas of the site are impacted the most?

- URLs: Which URLs are affected the most?

- Keywords: Which keywords are affected the most?

By using this model, you can quickly understand where to focus and prioritise the action plan.

Outside of narrowing down in this way, it can also be useful to get data by:

- Device

- Country
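To show the idea behind this model, here's a minimal sketch that takes the same set of rows and totals the click change at whichever level you choose. The rows and field names (`section`, `url`, `keyword`, `device`, `clicks_before`, `clicks_after`) are hypothetical, so map them to whatever your export actually contains.

```python
from collections import defaultdict

# Hypothetical export: one row per keyword/URL pair with clicks for both periods.
rows = [
    {"section": "/c/", "url": "/c/sheds", "keyword": "sheds", "device": "mobile",
     "clicks_before": 500, "clicks_after": 300},
    {"section": "/c/", "url": "/c/sheds", "keyword": "garden sheds", "device": "desktop",
     "clicks_before": 200, "clicks_after": 150},
    {"section": "/p/", "url": "/p/drill", "keyword": "cordless drill", "device": "mobile",
     "clicks_before": 100, "clicks_after": 120},
]


def click_change_by(rows: list, key: str) -> list:
    """Total the click change per value of `key`, largest drop first."""
    totals = defaultdict(int)
    for row in rows:
        totals[row[key]] += row["clicks_after"] - row["clicks_before"]
    return sorted(totals.items(), key=lambda item: item[1])


for level in ("section", "url", "keyword", "device"):
    print(level, click_change_by(rows, level))
```

Starting at the broadest level and only drilling into the sections showing the biggest drops keeps the analysis focused.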

How to do the analysis?

There are two main ways I measure the impact of drops.

One is through Sistrix, and the other is by using GSC.

I tend to use GSC for sites when I've got access, and Sistrix is my choice when I don't.

Using Sistrix to analyse a drop.

Whilst I like Ahrefs, SEMrush, and other monitoring tools, at the moment Sistrix is my favourite tool for quickly seeing ranking changes.

This is because Sistrix:

- Makes it easy to break down changes thanks to its directories feature

- Has a ranking changes report that makes it easy to pinpoint changes

- Provides intuitive comparison tools like with its 'SERP compare' feature (shown later)

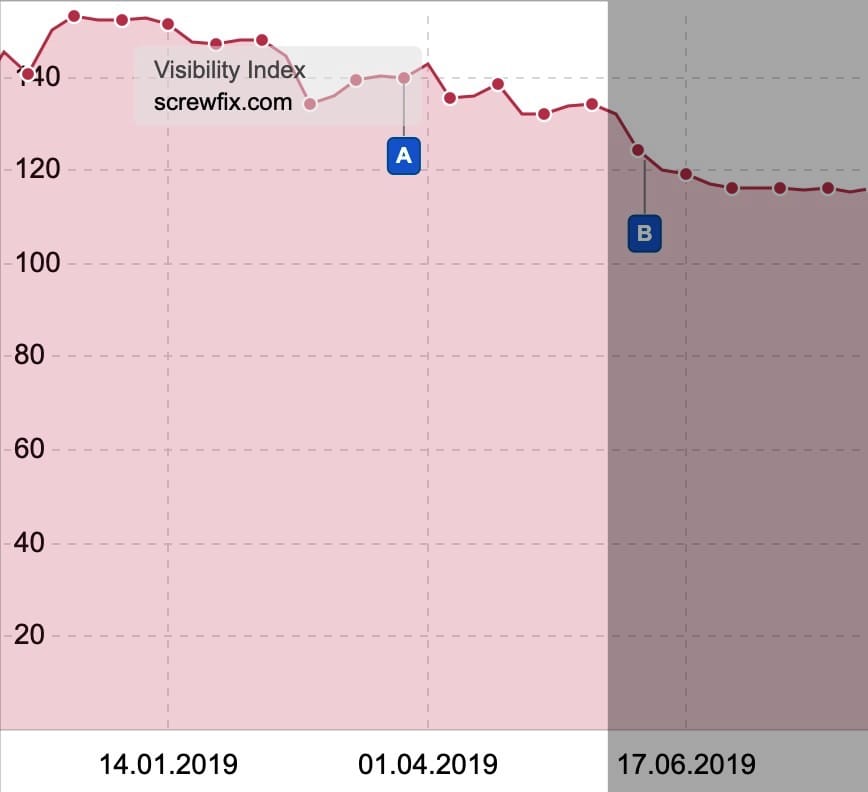

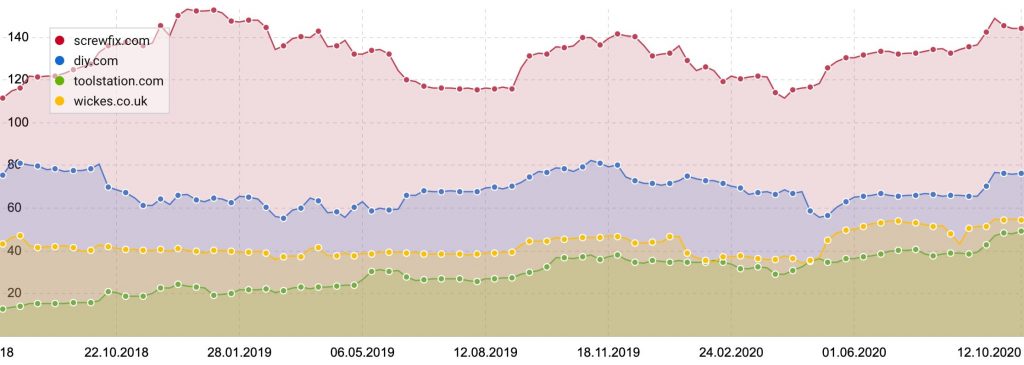

To show you how to do this, I'm going to run through a drop analysis with Sistrix for Screwfix, where visibility dropped and the drop wasn't due to a known algorithm update.

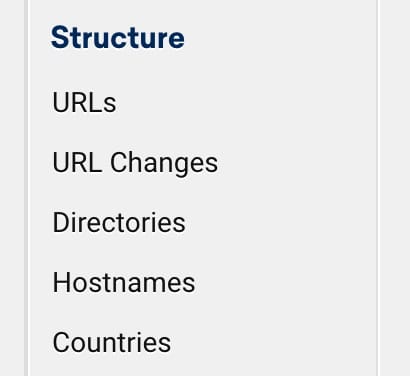

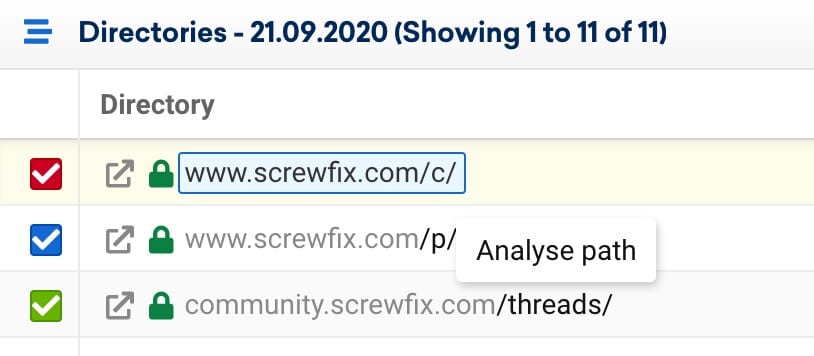

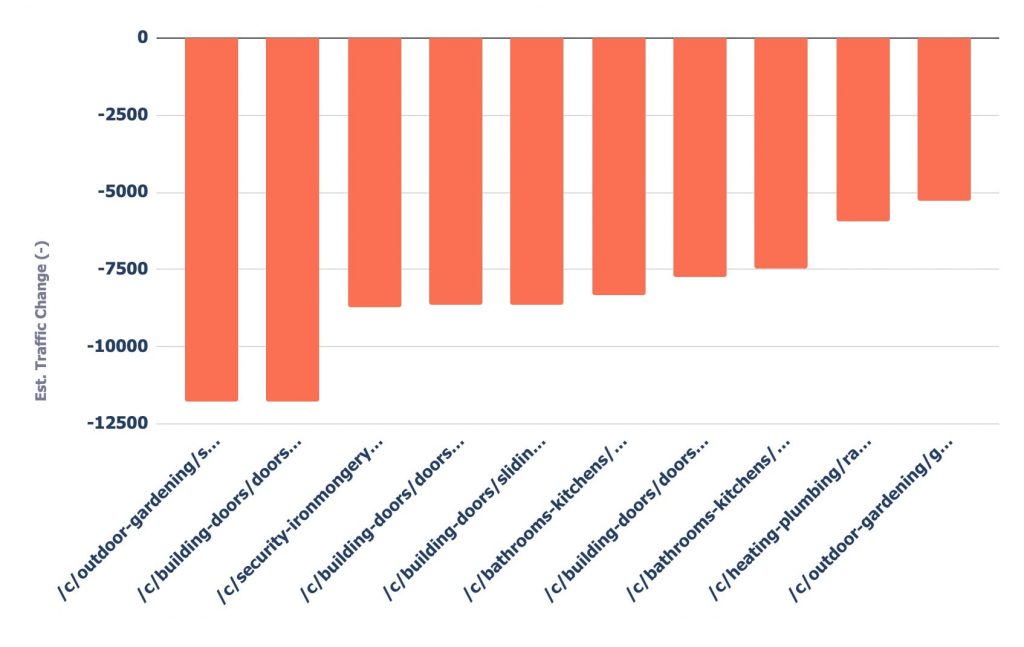

From the above we can already check off the sitewide view of the drop, so next head to the 'Directories' area from the sidebar.

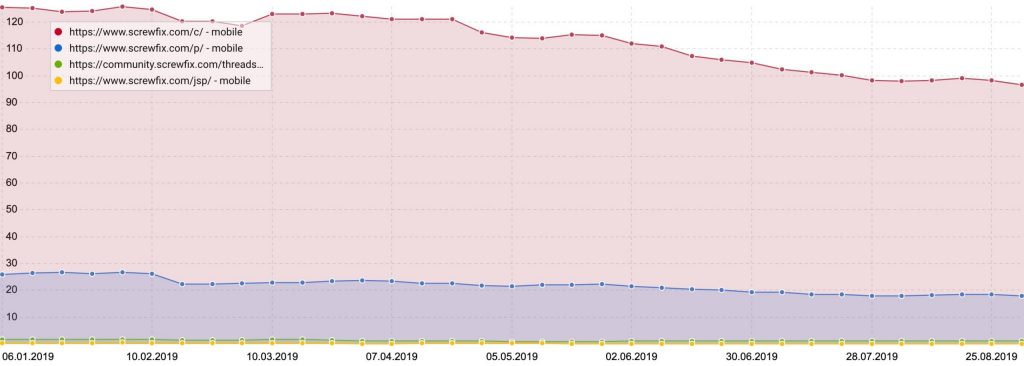

When you do this, Sistrix shows the visibility index again, but this time split by each directory of the site.

If you've implemented a good URL structure, you should hopefully now be able to see which section of the site has been impacted.

In this case, it's the /c/ directory which contains Screwfix category pages.

In the table below the chart select the /c/ directory and analyse path.

At this point, you can analyse URLs within the /c/ directory by heading back to the directories section in the sidebar again.

For this example, I want to see all ranking changes within the /c/ directory, so I'll stay there for now.

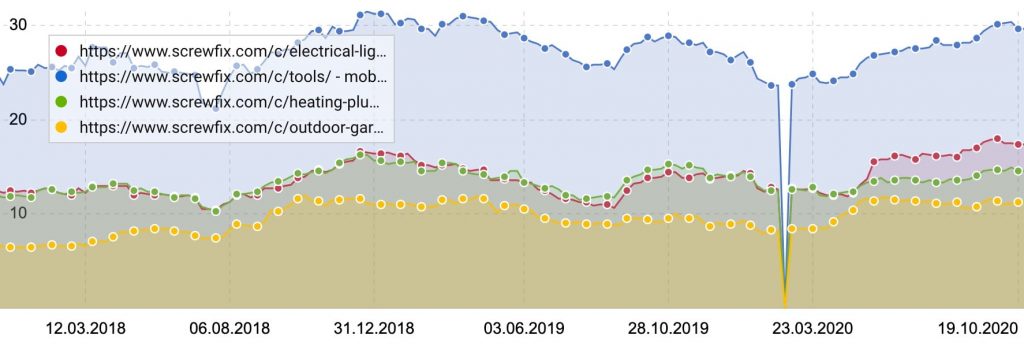

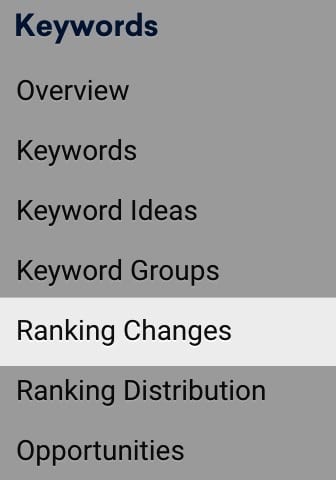

Head to the ranking changes report in the left sidebar.

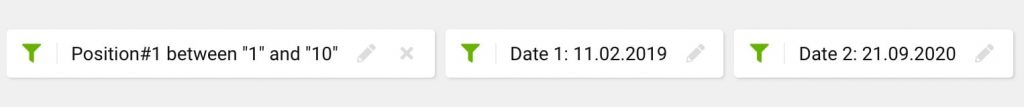

We now want to filter for the dates we've seen the drop in traffic.

I also usually add a position filter based on the 'from' date.

This is handy if you want to see what keywords have dropped that are traffic driving. These are the ones that are going to have the most considerable impact on Sistrix's visibility metric.

If you want to, tweak the filters more to see keywords that were previously in the top 3 and have decreased.

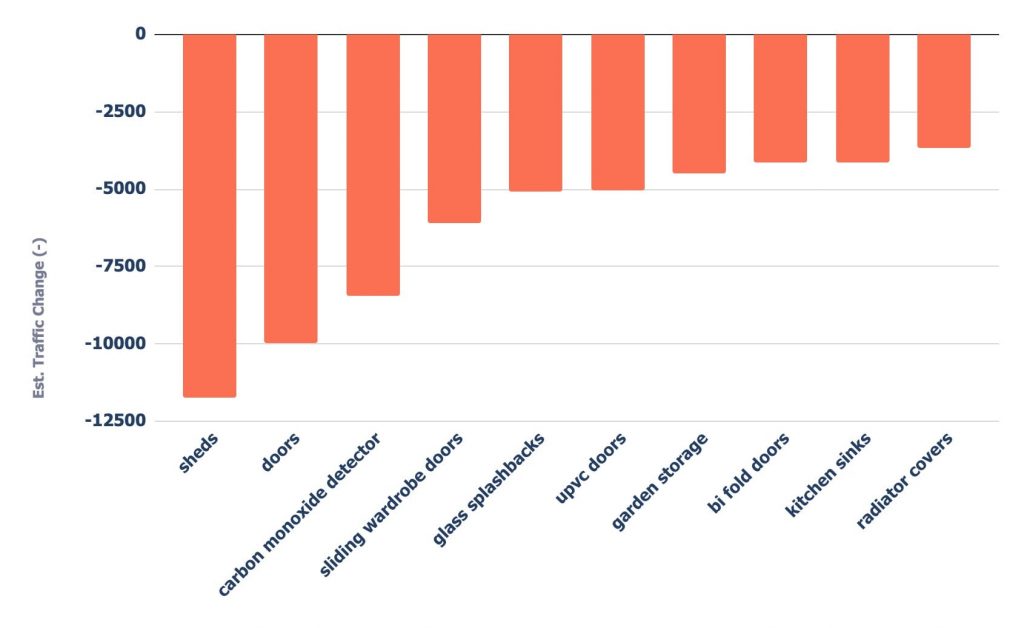

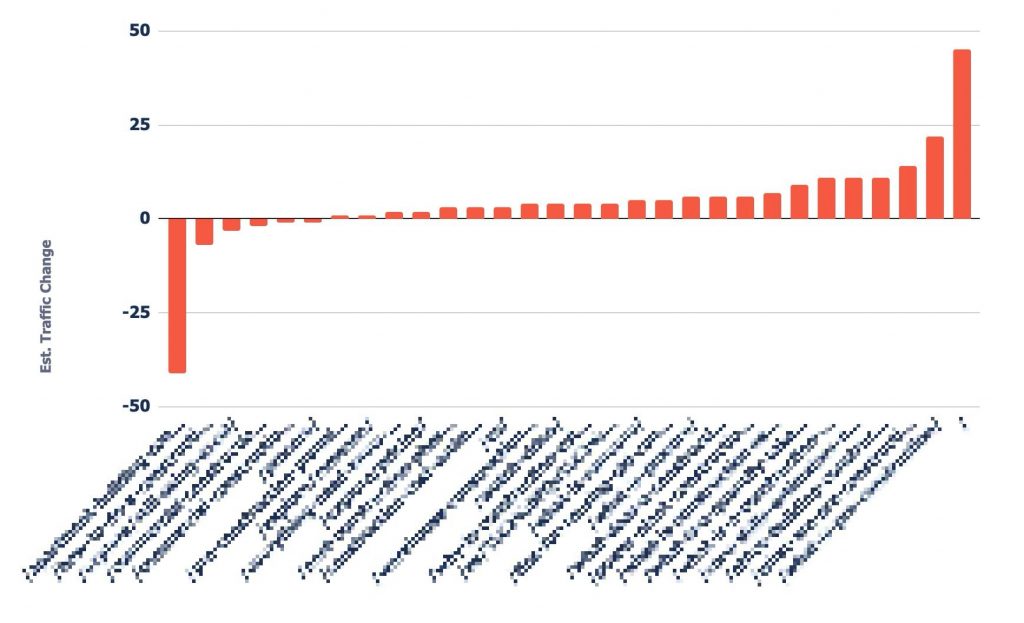
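If you export the ranking changes, the same position filter is easy to reproduce offline. The sketch below assumes hypothetical field names (`from_position`, `to_position`), not Sistrix's actual export columns, and treats a missing `to_position` as the keyword no longer ranking.

```python
# Hypothetical ranking changes between the 'from' and 'to' dates.
ranking_changes = [
    {"keyword": "sheds", "from_position": 2, "to_position": 7},
    {"keyword": "garden sheds", "from_position": 3, "to_position": None},
    {"keyword": "cordless drill", "from_position": 5, "to_position": 4},
    {"keyword": "shed base", "from_position": 25, "to_position": 40},
]


def dropped_from_top(rows: list, max_from_position: int = 10) -> list:
    """Keep keywords that ranked within max_from_position and have since dropped."""
    result = []
    for row in rows:
        was_in_top = row["from_position"] <= max_from_position
        new_position = row["to_position"]
        # None is treated as "no longer ranking", which counts as a drop.
        has_dropped = new_position is None or new_position > row["from_position"]
        if was_in_top and has_dropped:
            result.append(row["keyword"])
    return result


top_3_drops = dropped_from_top(ranking_changes, max_from_position=3)
print(top_3_drops)
```

Tightening `max_from_position` narrows the list to the keywords that were most likely driving traffic before the drop.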

We've now got a list of keywords dropping alongside the associated URLs. Next, let's figure out which keywords and URLs have seen the largest drop.

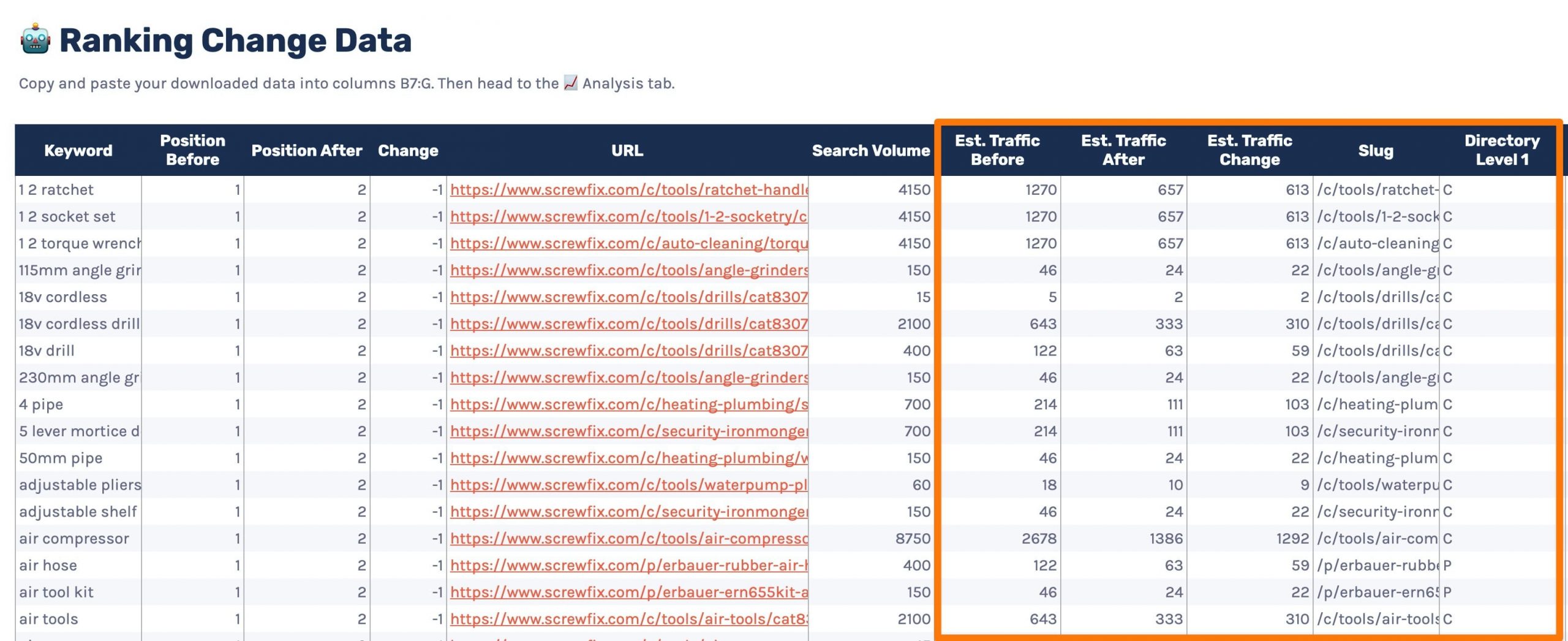

We'll be taking data outside of Sistrix to accomplish that. Use the template below (making a copy via 'File > Make a copy') and follow along.

The first step is to download the data from Sistrix.

You'll need to copy and paste the columns up to the 'Search Volume' column into the template.

Once you've copied the data, it should look like the below.

I've highlighted above some columns that will automatically fill in based upon some formulas. Make sure not to delete anything in these columns.

Head to the '📈Analysis' tab and see the results.

There is a handy dropdown at the top where you can pick an option on how you want to view the data, as well as an input for limiting the results returned.

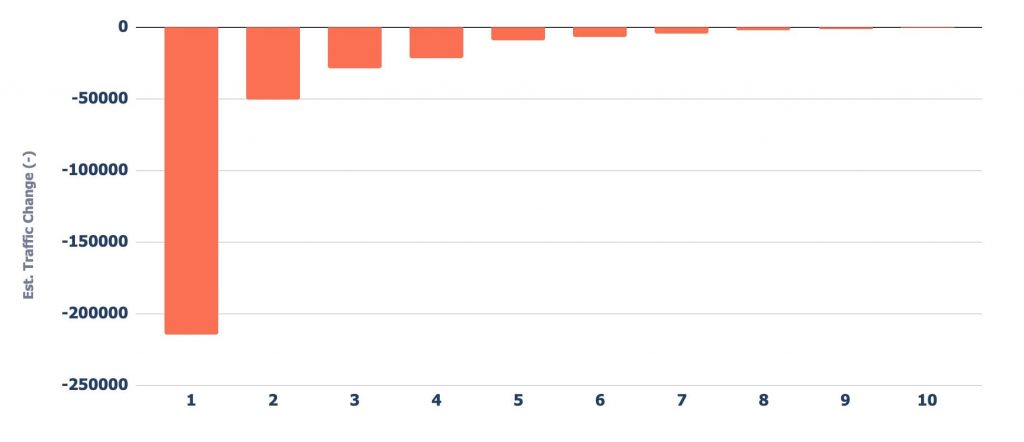

The previous position option in the dropdown allows you to see which starting positions the drops came from and which of those caused the largest impact on traffic.

As you'd expect, the drops from keywords Screwfix ranked higher for impacted them the most.

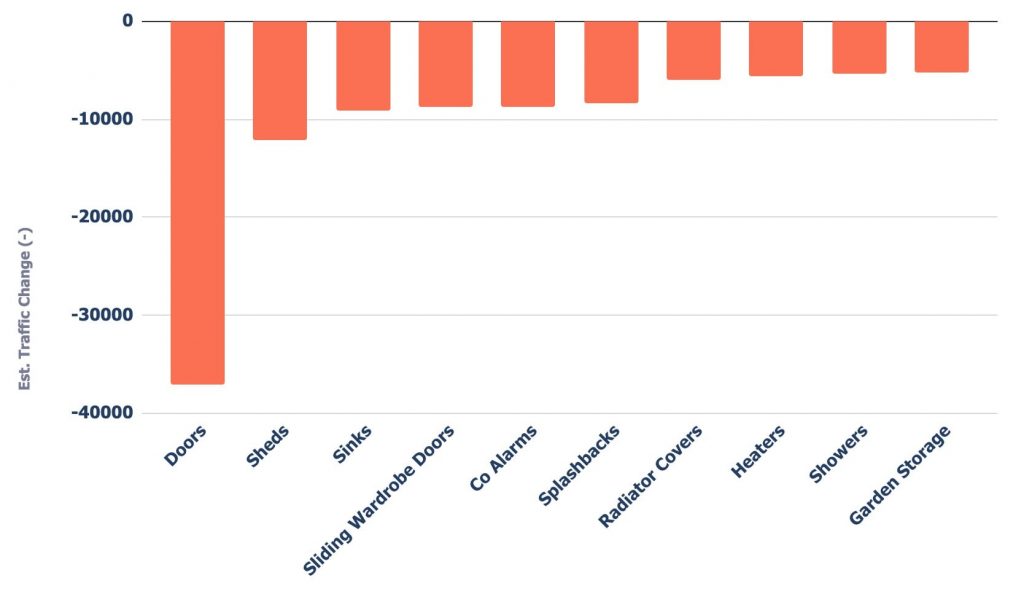

You can also see the drop by different sub-directory levels (3 levels deep by default).

As well as by each URL individually.

And by every keyword.
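The sub-directory grouping can be sketched in a few lines if you'd like to reproduce it outside of a spreadsheet. This is one assumed definition of a "directory level" (the first N path segments of the URL) and not necessarily how the template's formulas work. The URLs and click changes are made up.

```python
from collections import Counter
from urllib.parse import urlsplit


def directory_level(url: str, depth: int) -> str:
    """Return the first `depth` path segments of a URL, e.g. '/c/outdoor/'."""
    segments = [segment for segment in urlsplit(url).path.split("/") if segment]
    return "/" + "".join(segment + "/" for segment in segments[:depth])


def change_by_directory(rows: list, depth: int) -> dict:
    """Total the click change for each directory at the given depth."""
    totals = Counter()
    for row in rows:
        totals[directory_level(row["url"], depth)] += row["click_change"]
    return dict(totals)


# Hypothetical per-URL click changes.
url_changes = [
    {"url": "https://www.example.com/c/outdoor/sheds", "click_change": -200},
    {"url": "https://www.example.com/c/outdoor/fencing", "click_change": -50},
    {"url": "https://www.example.com/p/cordless-drill", "click_change": 20},
]

print(change_by_directory(url_changes, depth=1))
print(change_by_directory(url_changes, depth=2))
```

Note that with this simple definition a page slug counts as a segment, so shallow URLs such as `/p/cordless-drill` show up as their own "directory" at depth 2.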

But what if you don't need to use third-party data to do the analysis? In that case, I'd recommend using Google Search Console.

Using Google Search Console to analyse a drop.

Google Search Console is my preferred way of analysing organic traffic changes as you get keyword-level data.

If we were using Google Analytics, our analysis would stop at the URL level of the specificity process I went through earlier.

We'll first go through analysing in the frontend, then I'll show you how you can take things further with the API.

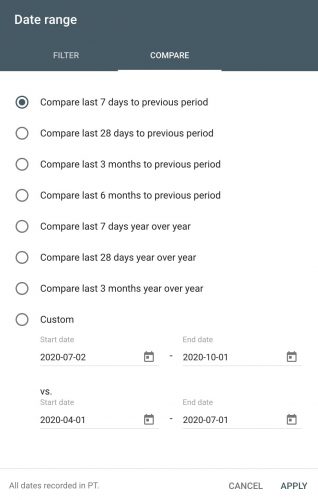

You'll first need to head to the performance report in the left sidebar.

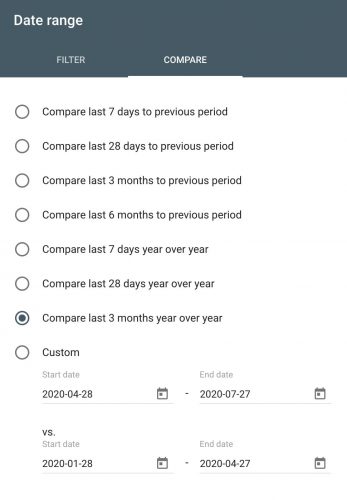

Once there, it's as simple as choosing the dates and heading to compare:

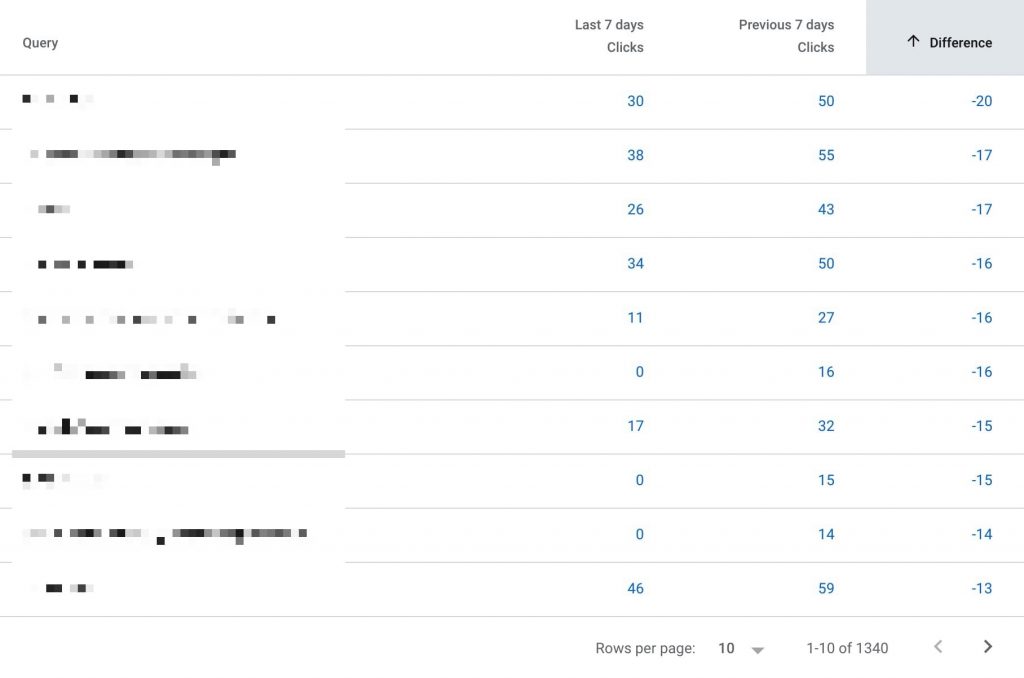

Once you've done that, select one metric to compare. In this case, I'm looking for traffic changes, so I've chosen clicks.

Then use the difference column in the table below to sort by significant decreases/increases.

Using the tabs above the table, you can view the largest changes by page, country, device, and search appearance.

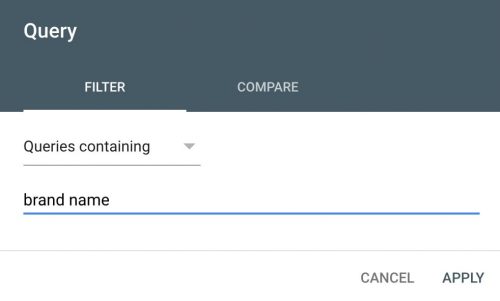

Thanks to the keyword-level data, you can also filter by query.

By filtering by brand name, you can evaluate whether the change in traffic is due to non-brand or brand traffic.

After you've filtered for your brand name, look at the scorecard metrics to see how much of the traffic change was due to brand.

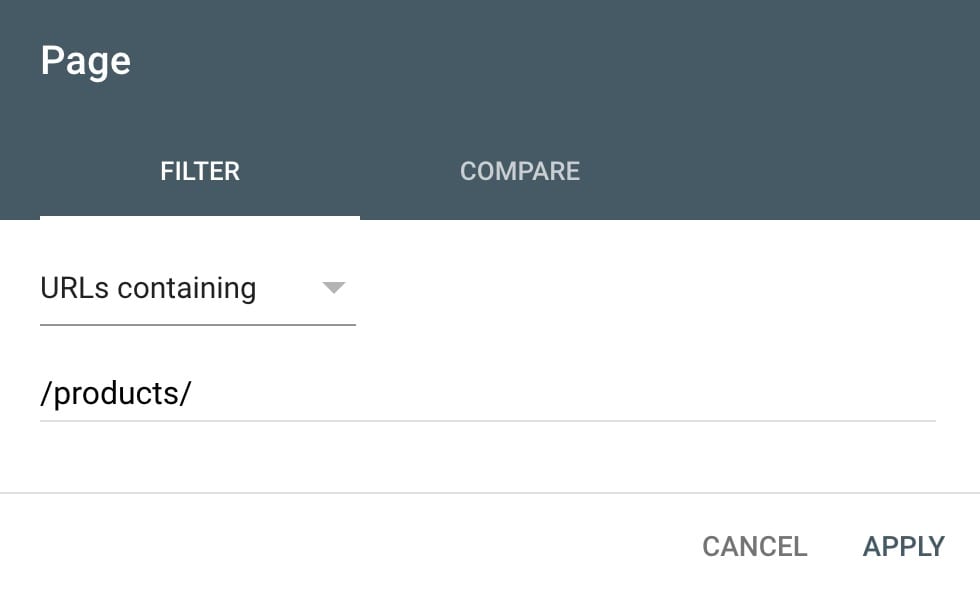
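If you've exported query data for both periods, the brand vs non-brand split can be sketched like this. The queries, click numbers, and field names are hypothetical, and the brand terms (including a common misspelling) are something you'd supply yourself.

```python
import re

# Hypothetical query-level export with clicks for both periods.
query_rows = [
    {"query": "screwfix sheds", "clicks_before": 300, "clicks_after": 280},
    {"query": "screw fix opening times", "clicks_before": 100, "clicks_after": 90},
    {"query": "sheds", "clicks_before": 500, "clicks_after": 300},
]


def split_brand(rows: list, brand_terms: list) -> dict:
    """Total the click change for brand and non-brand queries.

    Assumes brand_terms is non-empty; an empty list would match every query.
    """
    pattern = re.compile("|".join(re.escape(term) for term in brand_terms), re.IGNORECASE)
    totals = {"brand": 0, "non-brand": 0}
    for row in rows:
        bucket = "brand" if pattern.search(row["query"]) else "non-brand"
        totals[bucket] += row["clicks_after"] - row["clicks_before"]
    return totals


brand_split = split_brand(query_rows, ["screwfix", "screw fix"])
print(brand_split)
```

In this made-up example, most of the drop sits in non-brand queries, which would point away from a brand demand problem.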

If you want to analyse a specific directory, add a 'containing' page filter for the directory you want to investigate.

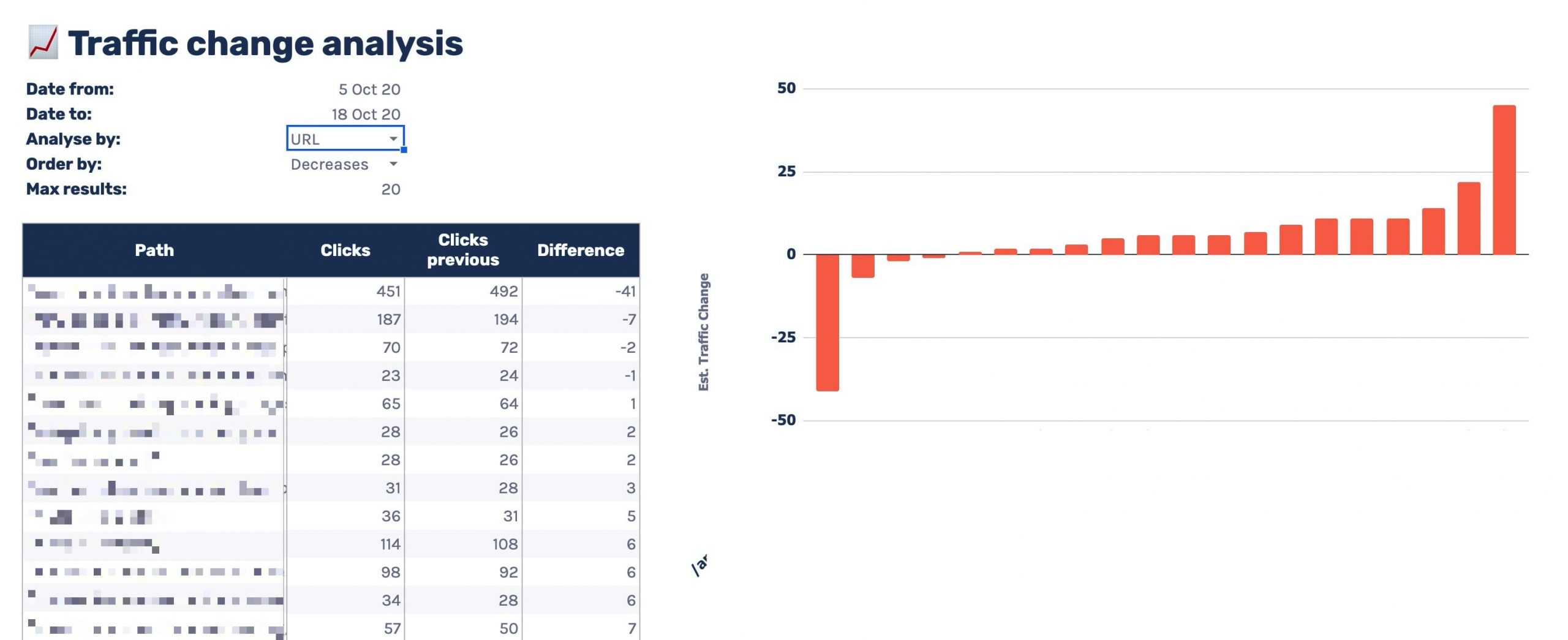

Using my GSC traffic change analysis template

While the frontend is great, I like bringing data into Google Sheets to make checking drops and increases quicker.

The template is similar to the Sistrix one.

You can see it's got a variety of features including:

- A customisable date range, with the comparison defaulting to the previous period

- Multiple data grouping options, showing you drops/increases by:

  - Keyword

  - Position

  - URL

  - Brand/non-brand

  - Directory level 1/2/3

- Order data by the largest increases or decreases

- Limit the data returned

First, make a copy of the template linked below via 'File > Make a copy'.

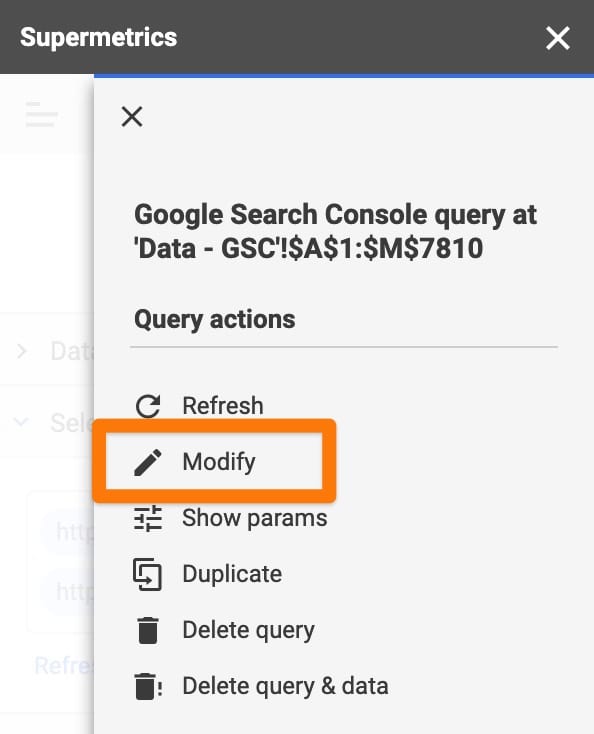

Head to the 'Data - GSC' tab and open up the Supermetrics sidebar via Addons > Supermetrics > Launch sidebar in the top menu.

Select cell A1 and modify the Supermetrics query in the right sidebar.

You'll then need to:

- Select the GSC profile you want to analyse

- Select a date range (if required); it defaults to 3 months. Remember you'll need a date range that includes the comparison period.

- In the options dropdown, enter your brand name in the brand keywords area

Once done, apply changes and wait for the sheet to load.

Next, configure the '📈 Analysis' sheet to your liking and look over the tables/charts to quickly highlight where traffic changes are coming from.

Creating a plan of action

Now we know where we've seen the impact, the next steps are to evaluate why we think we've dropped in organic traffic.

Enter this part of the process with a mindset of:

- Is your site providing more value than competitors?

- Is there any way you could provide more value?

The plan of action needs to be an evaluation of your SEO strategy and maybe even your business model.

Details of how to do each task are outside the scope of this article. However, here's where I'd start.

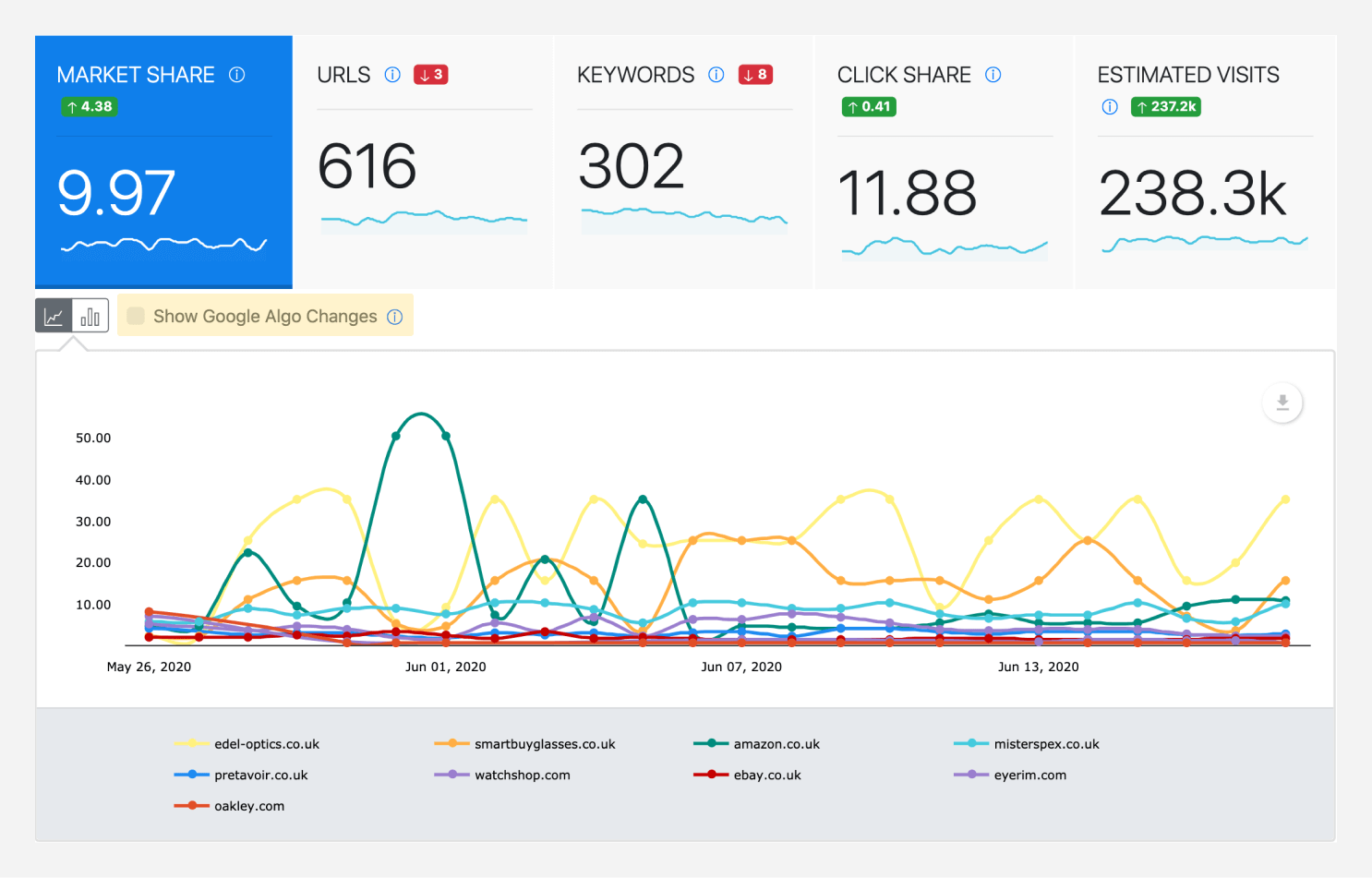

Competitor analysis

If you've been dropping in traffic, someone else has been gaining.

So start by finding who's been taking traffic from you.

If you use a rank tracker, this could be as simple as logging in and checking some charts.

In Sistrix, it's as easy as adding some competitors when viewing your own visibility.

Once you've done that, you get a great view of market trends.

But it's important to find who is gaining specifically for the keywords you're dropping for.

Pick out some of the top keywords from our earlier analysis and start with a SERP analysis to see what has changed.

To see which competitor has moved where, you would have needed rank tracking set up before the drop, or you'll need a competitor analysis tool.

Most competitor analysis tools provide historical data; if you're an Ahrefs fan, they provide it via their keyword explorer.

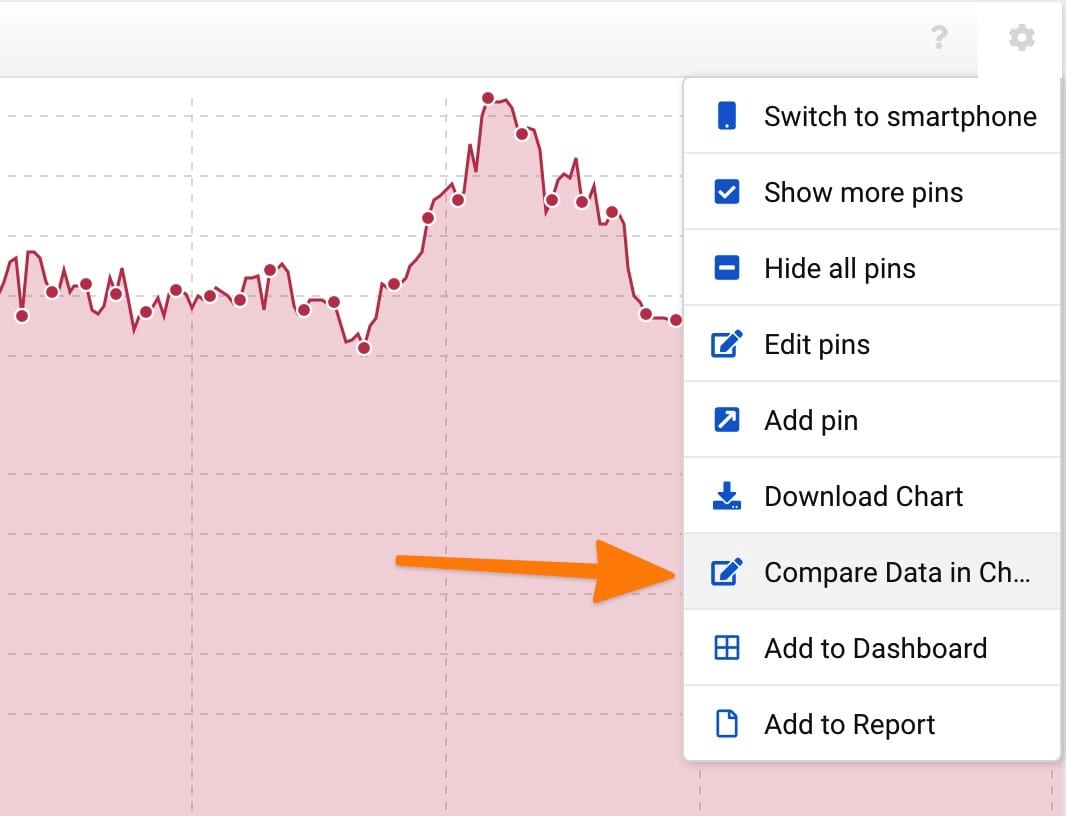

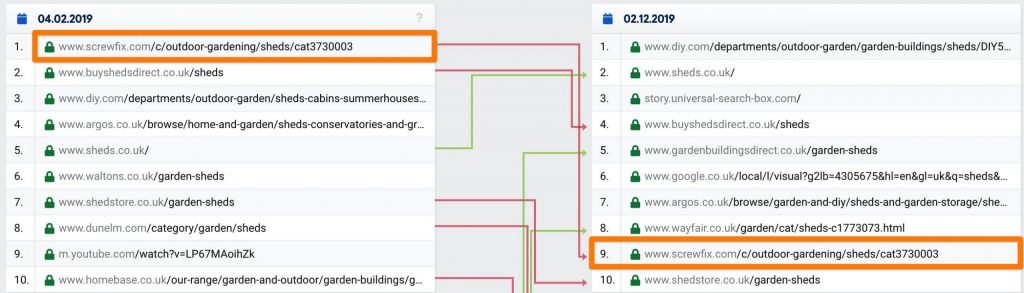

I tend to use Sistrix as they've got some handy features for comparing SERP changes.

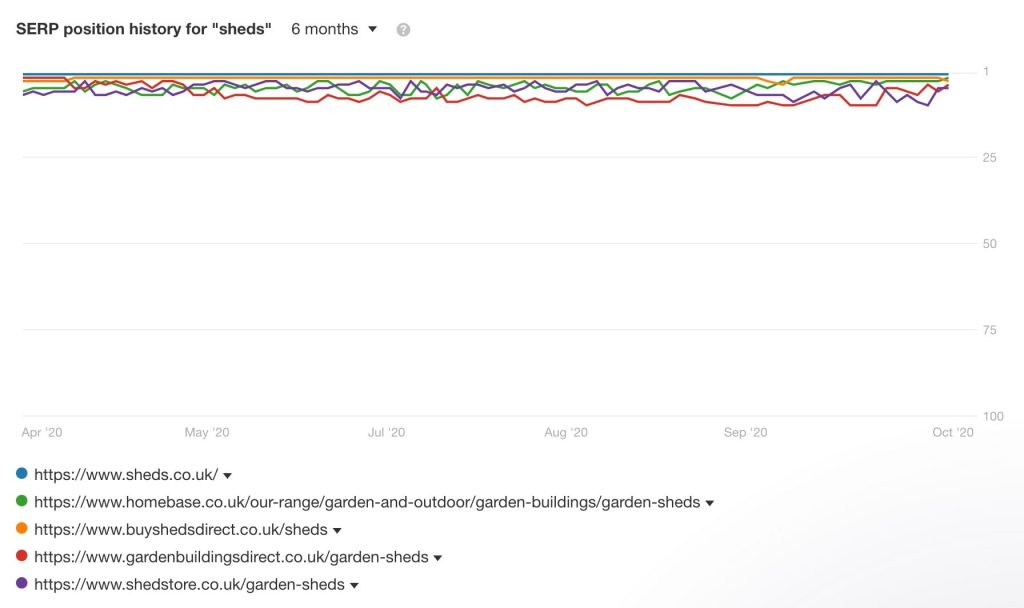

In this example, I'm researching the term 'sheds'.

Head to 'SERP Compare' in the left sidebar and enter a filter for the dates you dropped.

You now get a view of what precisely changed for that keyword.

Now start making some page-level comparisons against competitors performing better, asking questions like:

- How does the content compare?

- Does this page have better inbound links?

- Is the UX/UI on this page better than mine?

- How is the site internally linking to this page?

- Does the site cover the topic of what the user searches for?

  - For example, does it cover keyword variations, common questions, and multiple-intents in an organised way?

Then compare the domains, asking things such as:

- Does the better-performing site specialise in this product/service offering?

  - For example, sheds.co.uk specialises, Argos is a general retail store.

- Does the site have more authority?

  - Use metrics from tools like Ahrefs, Majestic, or Moz to compare.

- Is this site a more popular brand?

  - Enter the brand names within a keyword research tool or compare using Google Trends.

- Is this site more popular within the niche?

  - Use a keyword research tool and combine '[brand name] + [product/service]', in this case 'screwfix sheds' vs 'argos sheds'.

Once you've done this for a few of the dropped keywords, you'll start to get a view of potential reasons for the drop.

Content auditing.

We checked earlier for technical issues, so next start evaluating your content. While assessing, have the mindset that your site is only as good as your weakest pages.

It's best to do this because there is a good chance Google may evaluate your site as a whole.

> In general when it comes to quality of a website we try to be as fine grained as possible to figure out which specific pages or parts of the website are seen as being really good and which parts are kind of maybe not so good.
>
> And depending on the website, sometimes that’s possible. Sometimes that’s not possible. We just have to look at everything overall.
>
> John Mueller

From experience, especially when impacted by an algorithm, I find issue areas of a site tend to drag down higher quality areas.

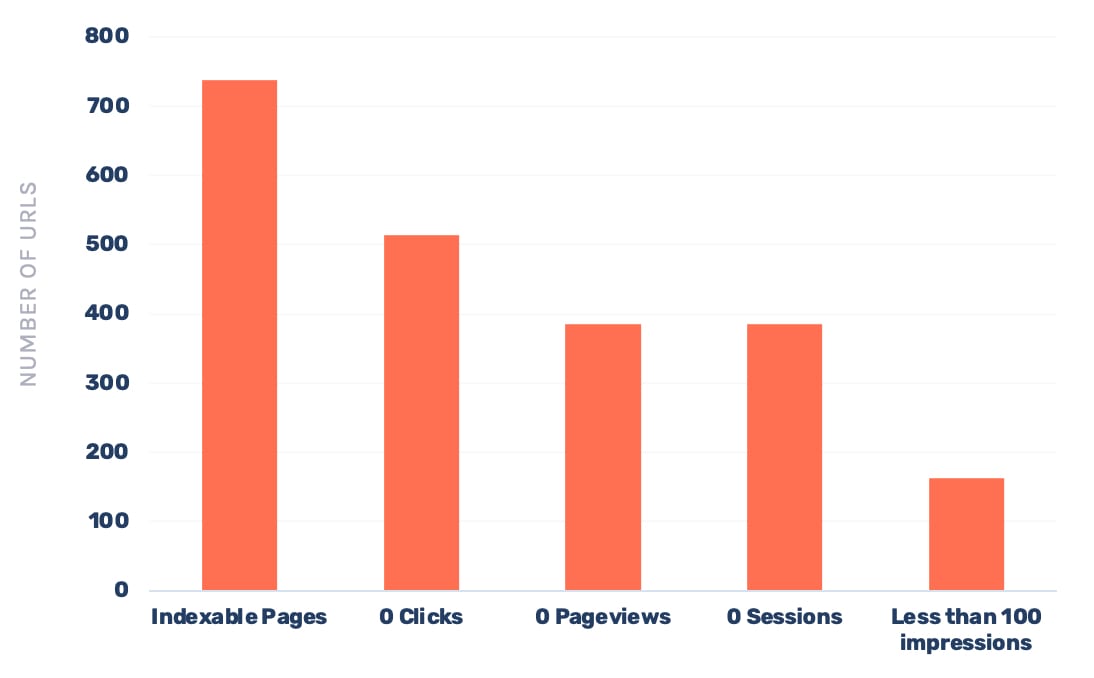

Begin with a site-wide analysis to spot issues with content performance. I do this by comparing the number of indexable URLs vs:

- Google Search Console metrics

  - Clicks

  - Impressions

- Google Analytics metrics (all channels)

  - Sessions

  - Pageviews

This analysis allows you to quickly understand whether we have pages on the site:

- With a low search interest

- That nobody navigates to

- That get a low amount of external traffic, both via organic and other channels

In the above example, you can see a large portion of the site receives no clicks from organic, no sessions from other channels, and isn't navigated to internally (zero pageviews).

This highlights a potential issue with the content being uploaded to the site.

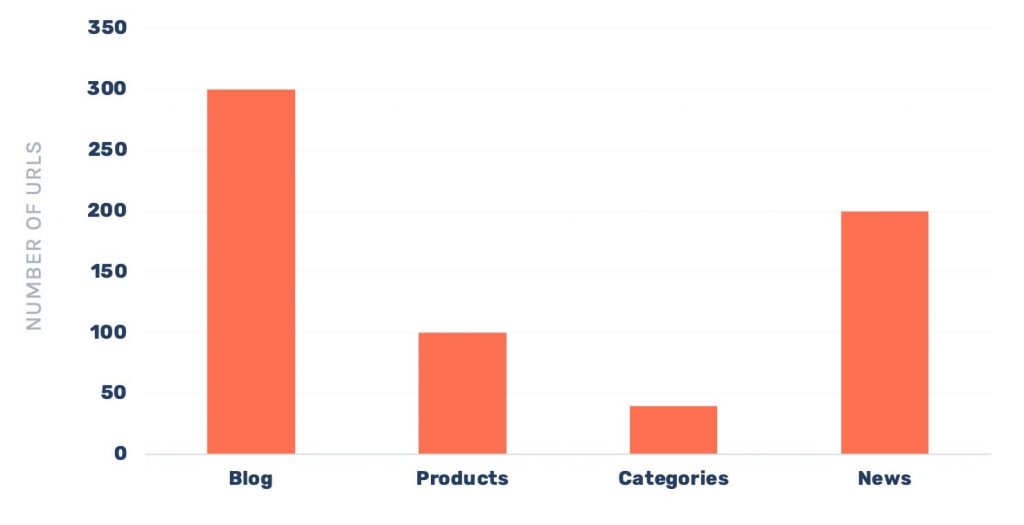

To analyse further, it can then help to look at zero-click pages by site section.

We now know both the blog and news area need some work, and we've got quite a lot of products not performing too well.
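The zero-click breakdown by site section is straightforward to reproduce once you've joined a crawl with click data. Here's a minimal sketch. The pages, sections, and click counts are invented for illustration, and `zero_click_share` is a hypothetical helper name.

```python
from collections import Counter

# Hypothetical indexable URLs joined with their organic clicks for the period.
pages = [
    {"url": "/blog/post-1", "section": "blog", "clicks": 0},
    {"url": "/blog/post-2", "section": "blog", "clicks": 0},
    {"url": "/blog/post-3", "section": "blog", "clicks": 0},
    {"url": "/blog/post-4", "section": "blog", "clicks": 42},
    {"url": "/p/drill", "section": "products", "clicks": 0},
    {"url": "/p/shed", "section": "products", "clicks": 310},
]


def zero_click_share(pages: list) -> dict:
    """Return the share of pages with zero clicks for each site section."""
    total = Counter()
    zero = Counter()
    for page in pages:
        total[page["section"]] += 1
        if page["clicks"] == 0:
            zero[page["section"]] += 1
    return {section: zero[section] / total[section] for section in total}


shares = zero_click_share(pages)
print(shares)
```

Sections with the highest share of zero-click pages are usually the best place to start the URL-by-URL review.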

You'll now want to go through and evaluate the content URL by URL and decide whether to keep, improve, merge or remove each page, with the preference being to improve where it makes sense.

Your decision should be based on a quantitative review of metrics collected about the page, such as:

- Clicks

- Impressions

- Referring domains

- Word count

- Date published/updated

- Ranking keywords

As well as a qualitative review, checking things such as:

- Is the content grammatically correct?

- Does the content cover the topic well?

- Does it link to other useful resources?

- Is it better than competing articles?

- Is the author an expert?

- Is the author clearly shown in the content?

Google itself has provided more questions you could ask when reviewing, released shortly after the Panda algorithm update.

Content auditing really deserves an article in itself, so it's something I'll cover in the future to provide more insights into how I do it, and also how to speed it up!

Summary

And we're done. You've successfully figured out the cause of a drop and where it's happening, and found potential ways to improve your site.

Now you need to begin implementing your strategy and tracking your growth back to recovery.

Hopefully this article has helped. If you have any questions, get in touch or tweet me @SamUnderwoodUK.